UX Roundup: Free UX Books | DoD Does Well | Design for Anthropomorphism | Overall Evaluation

- Jakob Nielsen

- Nov 20, 2023


Summary: InVision offers 11 books about UX for free download | US Department of Defense AI deployment plan | How to design UX for the fact that users anthropomorphize AI | In analytics, define an Overall Evaluation Criterion that aligns with long-term business value, not short-term goals that undermine user retention | 7 Ways to Use AI in UX

UX Roundup for November 20, 2023. Happy Thanksgiving from all of us here at UX Tigers, especially to the 29% of subscribers who are in the United States. (“All of us” being just Jakob.)

Free UX Book Library

InVision has produced a library of 11 books about user experience that they have made available for free download. I have written many books myself and know how much work goes into even one book, so a set of 11 good books is a significant offering. Here’s the list of topics:

Animation Handbook

Business Thinking for Designers

Collaborate Better Handbook

Design Engineering Handbook

Design Leadership Handbook

Design Systems Handbook

Design Thinking Handbook

DesignOps Handbook

Enterprise Design Sprints

Principles of Product Design

Remote Work for Design Teams

Time to study: Go download one or two of the InVision UX books and then read them. Books do no good if they don’t leave the bookshelf. (My #1 advice for a young UX professional who is the sole UXer on a team is to engage in independent study after hours.) Bookshelf by Midjourney.

US Department of Defense AI Deployment Plan

It’s always hard to tell from the pronouncements of officialdom what’s actually happening on the ground, but it appears that the U.S. Department of Defense bureaucracy is resisting its natural centralizing tendencies in favor of a more fruitful decentralized strategy for deploying AI across the military.

The DoD does have a “Chief AI Officer,” which seems appropriate given the importance of AI to any human venture, including the military. But in a press briefing, he stated that the DoD has rejected the idea of a centralized AI project. Instead, different parts of the military can experiment with creative applications of AI that make sense for their circumstances, including using available commercial tools. (“Not invented here” is otherwise a common budget-inflating plague.)

Unfortunately, the DoD still insists on centralized guidelines for these decentralized AI initiatives, which will no doubt slow them down and impede innovation. But overall, I feel encouraged by this development, which aligns with my general advice for doing AI:

Start small, and start now.

Run bottom-up AI projects, so users on the ground can discover the best applications of AI for their use cases.

Centralized military AI. It seems taxpayers may be spared from paying for such a misguided strategy, since the DoD is luckily adopting a decentralized approach to AI. (Dall-E)

Design Implications of AI Anthropomorphism

As we know from user research, users anthropomorphize AI interactions at various intensities of connection, ranging from surface politeness to romantic involvement. What does that mean for future user interface design as more and more products come to exhibit AI capabilities?

Darren Yeo wrote an interesting essay exploring the role of UX/AI designers in addressing ethical and emotional challenges as AI becomes increasingly anthropomorphic.

With AI and conversational UI everywhere, technology products are going anthropomorphic. (Dall-E)

Yeo opens with a captivating analogy, comparing the evolution of AI to the story of Pinocchio, a wooden puppet that desires to become human. This serves as a framework for discussing the ethical and emotional challenges that UX/AI designers must navigate.

He proceeds to identify three key challenges that designers face: the uncanny valley effect, pareidolia of consciousness, and AI hallucination:

The uncanny valley effect refers to the emotional discomfort people experience when interacting with an AI that is almost, but not quite, human-like.

Pareidolia of consciousness is the phenomenon where people perceive consciousness in AI systems where there is none.

AI hallucination involves the AI system generating plausible but incorrect or nonsensical answers.

Yeo argues that addressing these challenges requires a transdisciplinary approach, one that incorporates both aesthetic and ethical considerations into the design process. He concludes by advocating for a multimodal design approach, which involves integrating AI features into existing digital products while maintaining congruency with newer applications, ensuring a harmonious interconnection of various systems.

Ultimately, we need companies to design and ship such systems and then subject them to independent usability testing and field research to really find out what works. Speculating is good, but data is better. Internal usability findings collected during an iterative design process are good, but independent usability insights across multi-product studies are better.

Hallucinations are a well-known problem of AI. Who was at that party again? Image by Dall-E.

Overall Evaluation Criterion in Analytics

I continue to be impressed with Ronny Kohavi’s depth of thinking about analytics. He posted a set of slides from a recent talk about A/B testing. Usually, I say that slides can’t substitute for a live speaker, and I’m sure that’s also true here. Still, since the presentation has already happened, the slides are second-best and readily available online.

I particularly like the section about the “Overall Evaluation Criterion”: What should we optimize for? Too many people optimize for low-level outcomes, but that’s like a dog chasing its tail. Even if you catch it, you’ll be disappointed.

Don’t optimize for short-term gains like clicks or immediate revenue. Instead, focus on metrics that provide long-term value, such as customer lifetime value. Avoid vanity metrics and lagging indicators, and rely on critical metrics like acquisition, activation, retention, revenue, and referral.

When choosing an Overall Evaluation Criterion (OEC), it’s essential to consider its scope and impact. For example, in an email system, focusing solely on revenue generated from clicks leads to spamming, because the metric keeps going up the more emails you send your long-suffering customers, while the cost of the annoyed users who unsubscribe never shows up in it. Metrics must reflect user satisfaction and long-term value, not just immediate gains. The OEC isn’t just a metric; it’s a strategic decision that demands careful thought and consensus.
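To make the email example concrete, here is a minimal Python sketch that scores two variants of a hypothetical email campaign with two candidate OECs: click revenue per user, and the same revenue minus an assumed penalty for each unsubscribe. The class and function names, the `lost_value_per_unsub` parameter, and all the numbers are made up for illustration. Charging each unsubscribe an estimate of its lost future value is one common way to build retention into the criterion, not necessarily the exact formula from Kohavi’s slides.

```python
from dataclasses import dataclass


@dataclass
class EmailVariant:
    """Aggregate results for one arm of a hypothetical email A/B test."""
    name: str
    users: int            # users assigned to this arm
    click_revenue: float  # revenue attributed to email clicks
    unsubscribes: int     # users who unsubscribed during the test


def naive_oec(v: EmailVariant) -> float:
    """Short-term metric: click revenue per user. Tends to rise with every extra email."""
    return v.click_revenue / v.users


def retention_aware_oec(v: EmailVariant, lost_value_per_unsub: float) -> float:
    """Click revenue per user, minus the estimated future value lost to unsubscribes.

    lost_value_per_unsub is an assumed input: the revenue you expect to forgo
    from a user who can no longer be emailed.
    """
    penalty = v.unsubscribes * lost_value_per_unsub
    return (v.click_revenue - penalty) / v.users


if __name__ == "__main__":
    # Made-up numbers for illustration only.
    weekly = EmailVariant("1 email/week", users=10_000, click_revenue=5_000.0, unsubscribes=20)
    daily = EmailVariant("1 email/day", users=10_000, click_revenue=9_000.0, unsubscribes=400)

    for v in (weekly, daily):
        print(f"{v.name}: naive={naive_oec(v):.2f}, "
              f"retention-aware={retention_aware_oec(v, lost_value_per_unsub=15.0):.2f}")
```

With these invented numbers, the daily variant wins on the naive metric (0.90 vs. 0.50 per user) but loses on the retention-aware one (0.30 vs. 0.47), which is the spamming trap in miniature. In practice, the hard part is agreeing on the penalty value, which is exactly the kind of strategic decision that needs consensus.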

If the dog catches its tail, it’ll go “Ouch.” Your business will have a similar outcome if you succeed in optimizing your design for short-term metrics that undermine customer retention. (Dog by Leonardo.)

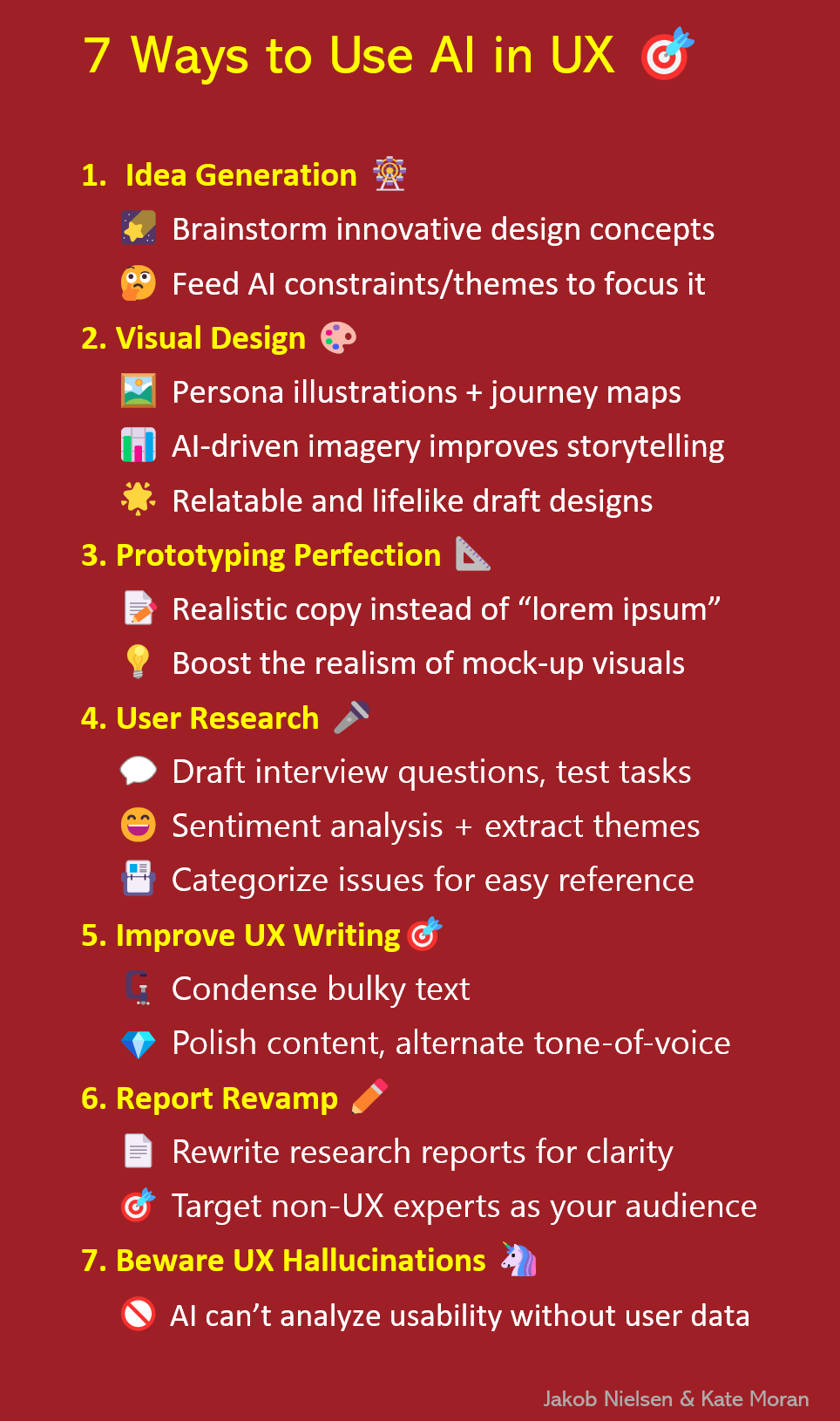

7 Ways to Use AI in UX

Getting Started with AI for UX: Use generative-AI tools to support and enhance your UX skills — not to replace them. Start with small UX tasks, and watch out for hallucinations and bad advice.

The full article has much more detail, but here’s an infographic summarizing 7 things you can do with AI to improve your work as a UX professional:

Feel free to copy or reuse this infographic, provided you give https://jakobnielsenphd.substack.com/p/get-started-ai-for-ux as the source.